Disinformation moves from fringe sites to Facebook, YouTube

Report: Extremists promoting conspiracies are using same tactics as foreign actors

Lawmakers and regulators focusing their attention on Facebook, Twitter and YouTube for the platforms’ role in propagating disinformation may be missing a big chunk of other online sites and portals that drive conspiracies and outright falsehoods, according to a nonprofit group that is studying how disinformation works.

Discussion portals such as 4chan, 8chan, Reddit and Gab, smaller social media sites such as Pinterest, payment and crowdfunding sites such as PayPal and GoFundMe, and online retailers such as Amazon are all part of a large online ecosystem that helps domestic and foreign agents shape disinformation and launch adversarial campaigns, the Global Disinformation Index said in a report released last week.

The group is funded by USAID, the United Kingdom, and philanthropic entities.

“Disinformation agents, both domestic and foreign, have a large library of content to draw from in crafting new adversarial narratives,” the group’s report, titled “Adversarial Narratives: A New Model for Disinformation,” said. “In practice this means less overtly fabricated pieces of content” and more material that draws on bits and pieces of factual information that is overlaid with conspiracy and disinformation.

Whether it is large nation states such as Russia using influence campaigns to sway American voters or extremist groups promoting conspiracies about vaccines, immigrants and political candidates, they are all using similar tactics, according to the report.

“We need to talk about not just social media platforms but other elements including payment portals, everything from PayPal, Etsy and crowdfunding sites, and other secondary platforms in which violent extremist organizations that are moved off Google can still fundraise,” said Ben Decker, author of the report.

For example, followers of QAnon, a far-right conspiracy group that believes President Donald Trump is waging a secret battle against so-called deep state actors engaged in a global child sex trafficking ring, “regularly consume content across anonymized message boards like 4chan, 8chan, Voat, and Reddit,” the report said. But QAnon followers, the report added, also “post content to more open platforms like Facebook, Twitter, YouTube, and Instagram” and sell their QAnon-themed merchandise on eBay, Etsy and Amazon.

Eventually conspiracy theories and disinformation bubble up and are repeated by more mainstream news outlets such as Fox News, the report noted. The FBI is said to have labeled QAnon as a domestic terror group.

Some of the fringe sites, including 8chan where police believe last week’s shooter in El Paso posted an anti-immigrant manifesto, predate Google and Facebook, Decker said. 4chan, another discussion board where conspiracies flourish, was set up in 2003, a few years before Facebook was launched. Stormfront, a neo-Nazi, Holocaust-denying site, was launched in 1996, two years before Google, he said.

After the El Paso shooting, the company that was hosting 8chan took the site offline, but many experts believe that the site will reappear elsewhere as has happened with similar sites in the past.

The anti-5G campaign

Disinformation and influence campaigns also don’t have to be violent.

The Disinformation Index report looked into how a vast network of actors around the world has promoted an anti-5G narrative, suggesting that the next generation of mobile communication technology could not only pose serious health risks to users but also damage the environment and undermine national security.

The narrative began soon after June 2016, when Tom Wheeler, then chairman of the Federal Communications Commission, welcomed 5G networks in a speech in Washington and said, “Turning innovators loose is far preferable to expecting committees and regulators to define the future.”

Within two weeks of that speech, a group called InPower Movement, which has 9,300 YouTube followers, uploaded a video titled “The Truth about 5G” that raised the question of whether there was a “clandestine force working behind the scenes in the United States, censoring the truth about 5G rollout?”

By early 2017 another group uploaded a video on YouTube titled “The New Wireless 5G is Lethal.”

By April that year, fabricated quotes attributed to Wheeler saying there was no time to study the health implications of 5G appeared on Twitter with the hashtag #Stop5G.

In late 2017, Max Igan, a conspiracy theorist who appears as a political commentator on Iranian state-backed Press TV, posted a YouTube video alleging that 5G was part of a “new world order” and that the technology would usher in Matrix-like alternative realities, the report said. Igan then uses such conspiracies to raise money on sites like Patreon, which allows artists and other content creators to collect payments from followers, the report said.

By early 2018, the #Stop5G narrative had migrated to other platforms such as Reddit and then on to Facebook, where 200 pages and groups were devoted to the topic, according to the Disinformation Index report.

In May 2018, RT, the Kremlin-backed propaganda TV outlet, broadcast the first of eight news segments on 5G raising questions about the cancer risk posed by the technologies. Headlines on RT called 5G a “dangerous experiment on humanity,” “a crime under international law,” and “Totally Insane: Telecom Industry Ignores 5G Dangers.”

The RT coverage shows how “countries like Russia can pick up on existing disinformation campaigns in an attempt to sow social discord and increase the perception of popularity of certain concepts or sentiments,” the Disinformation Index report said.

A full year later, in May 2019, Fox News host Tucker Carlson repeated some of the unproven claims and questions, asking, “are 5G networks medically safe?”

Other disinformation campaigns about the supposed health risks of vaccines and rumors about certain presidential campaigns take similar paths, Decker said.

The presidential candidacy of Sen. Kamala Harris, Democrat of California, has suffered from a similar trajectory of disinformation, Decker noted.

The fact that Harris’s mother was born in India and her father was born in Jamaica is twisted to make the claim that Harris herself is not an American citizen, or that she’s not an African-American. Harris was born in Oakland, California.

Such claims and rumors start on 4chan or Reddit, and academic research has shown that content from those sites eventually percolates up to Facebook and YouTube, Decker said.

Content moderators on social media sites are then confronted with thorny questions about whether bad information on a candidate is a smear campaign of the kind typical of politics or a more serious violation of the platform’s standards, Decker said.

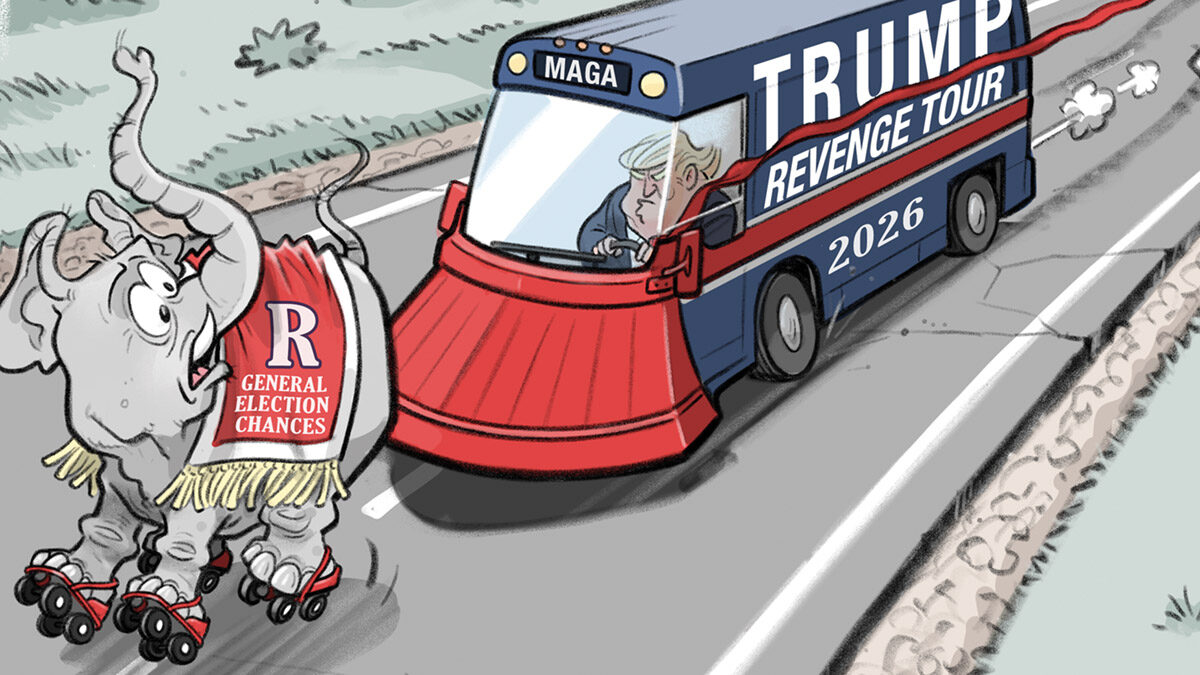

When social media platforms remove conspiracy theories and related posts, Trump and members of Congress accuse them of smothering conservative voices, putting pressure on the platforms to potentially allow such conspiratorial voices to continue on those sites, Decker said. “It creates a dangerous precedent.”
